Now, this was a week in which some really important work was done.

Last week, I wrote about the roadblocks I was facing while writing my own game engine: guaranteeing a smooth game loop, a constant frame rate, and float precision.

While it is quite easy to build a functional game loop, it is harder to build one that does not break under stress. Somehow my Windows framework wasn’t sending regular updates to my main loop, and I spent quite a while scratching my head trying to figure out why.

However, time is precious and it was running out. I had to make a decision, and make it quickly. I chose to jump into Unreal and port all my code over to Unreal 4.16.

Jumping to Unreal

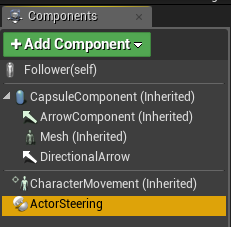

I wanted to build a follower behavior, but I also wanted to build it right. So I went with a component-based architecture from the get-go and implemented the steering behaviors as Actor Components, so that they can be attached to any actor and reused.

The Actor Steering component is a C++ component whose functions are exposed to Blueprints, so that scripters can integrate it into their scripting workflow.

The code is the same as demonstrated in earlier posts. The only differences are that it uses Unreal’s FVector class instead of our own custom vector, and that it gets the location, velocity, etc. from the owning actor. Here is what Arrive looks like in Unreal (see last week’s post for what it looked like in the custom engine):

    FVector UActorSteeringComponent::Arrive(const FVector& Target)
    {
        AActor* Owner = GetOwner();
        FVector ToTarget = Target - Owner->GetActorLocation();
        float Distance = ToTarget.Size();

        if (Distance > 0)
        {
            // Desired speed shrinks with distance, capped at the Actor's max speed.
            float Speed = Distance / DecelerationCoefficient;
            Speed = FMath::Min(Speed, mpMovementComponent->GetMaxSpeed());

            // ToTarget / Distance is the unit direction towards the target.
            FVector DesiredVelocity = ToTarget / Distance * Speed;
            return DesiredVelocity - Owner->GetVelocity();
        }

        // Already at the target: no steering needed.
        return FVector::ZeroVector;
    }
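
To see the deceleration outside the engine, here is a minimal standalone sketch of the same Arrive math. It assumes a hypothetical `Vec2` type in place of FVector and takes the position, velocity, and max speed as plain parameters instead of reading them from the owning actor; none of these names come from the plugin.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

// Hypothetical stand-in for FVector, reduced to two dimensions.
struct Vec2 {
    float x, y;
};

inline Vec2 operator-(Vec2 a, Vec2 b) { return {a.x - b.x, a.y - b.y}; }
inline Vec2 operator*(Vec2 v, float s) { return {v.x * s, v.y * s}; }
inline float Length(Vec2 v) { return std::sqrt(v.x * v.x + v.y * v.y); }

// Returns the steering force that slows the agent as it nears the target.
Vec2 Arrive(Vec2 position, Vec2 velocity, Vec2 target,
            float maxSpeed, float decelerationCoefficient) {
    assert(decelerationCoefficient > 0.0f);
    const Vec2 toTarget = target - position;
    const float distance = Length(toTarget);
    if (distance <= 0.0f) {
        return {0.0f, 0.0f};  // already at the target
    }
    // Desired speed shrinks with distance, capped at the agent's top speed.
    const float speed = std::min(distance / decelerationCoefficient, maxSpeed);
    const Vec2 desiredVelocity = toTarget * (speed / distance);
    return desiredVelocity - velocity;
}
```

With a deceleration coefficient of 2 and a target 100 units away, the desired speed works out to 50, well below a top speed of 600, so a stationary agent eases in instead of charging at full speed.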

New behaviors and improvements to old ones

The benefits of jumping to Unreal were immediate. I was able to bang out three new behaviors:

Pursuit

Given a target Actor, the source Actor predicts the future position of the target (given a lookahead time) and Seeks towards that position.

    FVector UActorSteeringComponent::Pursuit(const AActor* TargetActor)
    {
        AActor* Owner = GetOwner();
        FVector TargetActorLocation = TargetActor->GetActorLocation();
        FVector TargetActorVelocity = TargetActor->GetVelocity();
        FVector ToTargetActor = TargetActorLocation - Owner->GetActorLocation();

        // Lookahead time is distance over the combined speeds (a time, not a
        // mix of time and speed), scaled down by the tunable modifier.
        float LookAheadTime = ToTargetActor.Size() / (mpMovementComponent->GetMaxSpeed() + TargetActorVelocity.Size()) / LookAheadTimeModifier;

        // Seek the predicted position rather than the current one.
        return Seek(TargetActorLocation + TargetActorVelocity * LookAheadTime);
    }
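
As a worked example of the prediction step, the following standalone sketch uses the common textbook form of the lookahead time: the distance to the target divided by the sum of the pursuer's max speed and the target's current speed. `Vec2` and `PredictTargetPosition` are hypothetical names for illustration, and the post's LookAheadTimeModifier is left out to keep the arithmetic easy to follow.

```cpp
#include <cassert>
#include <cmath>

// Hypothetical stand-in for FVector, reduced to two dimensions.
struct Vec2 {
    float x, y;
};

inline Vec2 operator+(Vec2 a, Vec2 b) { return {a.x + b.x, a.y + b.y}; }
inline Vec2 operator-(Vec2 a, Vec2 b) { return {a.x - b.x, a.y - b.y}; }
inline Vec2 operator*(Vec2 v, float s) { return {v.x * s, v.y * s}; }
inline float Length(Vec2 v) { return std::sqrt(v.x * v.x + v.y * v.y); }

// Estimates where the target will be by the time the pursuer could reach it.
Vec2 PredictTargetPosition(Vec2 pursuerPos, float pursuerMaxSpeed,
                           Vec2 targetPos, Vec2 targetVel) {
    const float combinedSpeed = pursuerMaxSpeed + Length(targetVel);
    assert(combinedSpeed > 0.0f);
    // Time = distance / speed, so the result is in seconds.
    const float lookAheadTime = Length(targetPos - pursuerPos) / combinedSpeed;
    return targetPos + targetVel * lookAheadTime;
}
```

For a pursuer at the origin with a max speed of 300 and a target at (600, 0) moving at (0, 100), the lookahead time is 600 / 400 = 1.5 seconds, so the predicted position is (600, 150).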

Evade

Similar to Pursuit, but the source Actor Flees from the predicted future position of the target.

    FVector UActorSteeringComponent::Evade(const AActor* TargetActor, float TriggerDistance)
    {
        AActor* Owner = GetOwner();
        FVector TargetActorLocation = TargetActor->GetActorLocation();
        FVector TargetActorVelocity = TargetActor->GetVelocity();
        FVector ToTargetActor = TargetActorLocation - Owner->GetActorLocation();

        // A negative TriggerDistance means "always evade"; otherwise only evade
        // when the target is within range (compared squared to avoid a sqrt).
        if (TriggerDistance < 0 || ToTargetActor.SizeSquared() <= TriggerDistance * TriggerDistance)
        {
            // Same lookahead as Pursuit: distance over the combined speeds.
            float LookAheadTime = ToTargetActor.Size() / (mpMovementComponent->GetMaxSpeed() + TargetActorVelocity.Size()) / LookAheadTimeModifier;
            return Flee(TargetActorLocation + TargetActorVelocity * LookAheadTime);
        }

        // Target is out of range: no steering needed.
        return FVector::ZeroVector;
    }
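
The trigger check in Evade can be isolated into a small standalone sketch. It assumes, as the code above suggests, that a negative trigger distance means "always evade", and it compares squared lengths so no square root is needed. `Vec2` and `ShouldEvade` are hypothetical names, not part of the plugin.

```cpp
// Hypothetical stand-in for FVector, reduced to two dimensions.
struct Vec2 {
    float x, y;
};

inline Vec2 operator-(Vec2 a, Vec2 b) { return {a.x - b.x, a.y - b.y}; }
inline float LengthSquared(Vec2 v) { return v.x * v.x + v.y * v.y; }

// True when the threat is close enough to react to.
// A negative triggerDistance disables the range check entirely.
bool ShouldEvade(Vec2 self, Vec2 threat, float triggerDistance) {
    if (triggerDistance < 0.0f) {
        return true;
    }
    return LengthSquared(threat - self) <= triggerDistance * triggerDistance;
}
```

A threat at (3, 4) is exactly 5 units from the origin, so it triggers evasion with a trigger distance of 5 but not with 4.9.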

Wander

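This simulates a random walk. The problem with applying a truly random force every frame is that the movement becomes jittery and unrealistic. The solution is to keep a wander target on a circle projected in front of the Actor and nudge it by a small random amount each update, so that the Actor drifts gradually off its path instead of twitching.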

    FVector UActorSteeringComponent::Wander(float DeltaTime)
    {
        // Scale the jitter by frame time so the drift does not depend on frame rate.
        float Jitter = WanderJitter * DeltaTime;
        mWanderTarget += FVector(RandomClamped() * Jitter, RandomClamped() * Jitter, 0);

        // Project the nudged target back onto the wander circle.
        mWanderTarget.Normalize();
        mWanderTarget *= WanderRadius;

        // Push the circle ahead of the Actor, then convert from local to world space.
        FVector Target = mWanderTarget + FVector(WanderDistance, 0, 0);
        Target = GetOwner()->GetTransform().TransformPosition(Target);

        FVector ToPos = Target - GetOwner()->GetActorLocation();
        ToPos.Normalize();
        FVector DesiredVelocity = ToPos * WanderMaxSpeed;
        return DesiredVelocity - GetOwner()->GetVelocity();
    }
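
The jitter-and-reproject step is the heart of Wander, so here is a minimal standalone sketch of just that part. `Vec2` and `UpdateWanderTarget` are hypothetical names, and `RandomClamped` is assumed to return a roughly uniform value between -1 and 1; the plugin's own helper may be implemented differently.

```cpp
#include <cmath>
#include <random>

// Hypothetical stand-in for FVector, reduced to two dimensions.
struct Vec2 {
    float x, y;
};

// Assumed behavior of RandomClamped(): uniform in [-1, 1).
inline float RandomClamped(std::mt19937& rng) {
    std::uniform_real_distribution<float> dist(-1.0f, 1.0f);
    return dist(rng);
}

// Nudges the wander target by a small random offset, then projects it back
// onto the wander circle so it drifts along the circle instead of jumping.
Vec2 UpdateWanderTarget(Vec2 wanderTarget, float jitter, float radius,
                        std::mt19937& rng) {
    wanderTarget.x += RandomClamped(rng) * jitter;
    wanderTarget.y += RandomClamped(rng) * jitter;
    const float length = std::sqrt(wanderTarget.x * wanderTarget.x +
                                   wanderTarget.y * wanderTarget.y);
    if (length > 0.0f) {
        wanderTarget.x = wanderTarget.x / length * radius;
        wanderTarget.y = wanderTarget.y / length * radius;
    }
    return wanderTarget;
}
```

Because the target is always pulled back onto the circle, it can only move a short way along the circle per update, which is what keeps the resulting heading changes smooth.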

Blueprints

Since the core functionality is implemented in code, we expose the interfaces and adjustable variables to the Unreal Editor using the UPROPERTY and UFUNCTION macros:

    UPROPERTY(EditAnywhere, BlueprintReadWrite, Category = "Steering")
    float DecelerationCoefficient;

    UFUNCTION(BlueprintCallable, Category = "Steering")
    FVector Arrive(const FVector& Target);

Now we can not only configure all of these variables in the editor, but also build complex behaviors out of these simple ones, entirely from script.
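
One common way to build a complex behavior out of simple ones is a weighted sum of their steering forces, clamped to a maximum force. The sketch below shows that idea in standalone C++; it is an assumption about how the behaviors might be combined, not the plugin's own approach, and `Vec2`, `Truncate`, and `Blend` are hypothetical names.

```cpp
#include <cmath>

// Hypothetical stand-in for FVector, reduced to two dimensions.
struct Vec2 {
    float x, y;
};

inline Vec2 operator+(Vec2 a, Vec2 b) { return {a.x + b.x, a.y + b.y}; }
inline Vec2 operator*(Vec2 v, float s) { return {v.x * s, v.y * s}; }
inline float Length(Vec2 v) { return std::sqrt(v.x * v.x + v.y * v.y); }

// Clamps a vector's magnitude to maxLength while preserving its direction.
Vec2 Truncate(Vec2 v, float maxLength) {
    const float length = Length(v);
    if (length > maxLength && length > 0.0f) {
        return v * (maxLength / length);
    }
    return v;
}

// Weighted sum of two steering forces, clamped to maxForce.
Vec2 Blend(Vec2 forceA, float weightA, Vec2 forceB, float weightB,
           float maxForce) {
    return Truncate(forceA * weightA + forceB * weightB, maxForce);
}
```

For example, blending forces of (300, 0) and (0, 400) with equal weights gives (300, 400), which has length 500; with a maximum force of 250 the result is scaled down to (150, 200).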

We'll probably see obstacle avoidance next week.

Find the source code here: https://github.com/GTAddict/UnrealAIPlugin/
